Gauge Block Calibration Intervals: Accuracy Standards

Gauge block calibration determines whether your measurement system drifts or stays true. The interval between recalibrations is not arbitrary: it flows from grade selection, usage intensity, environmental stability, and the measurement traceability standards that define your shop's capability floor. This article answers the questions that control whether your tolerance stack passes audit or fails inspection.

What Tolerance Grade You Choose Drives Your Calibration Interval

Q: Why does grade matter for calibration interval?

A: Each gauge block grade carries explicit tolerances tied to a standard: ISO 3650 internationally, or ASME B89.1.9 in North America. Grade determines both what the block can measure and how often it must be verified.

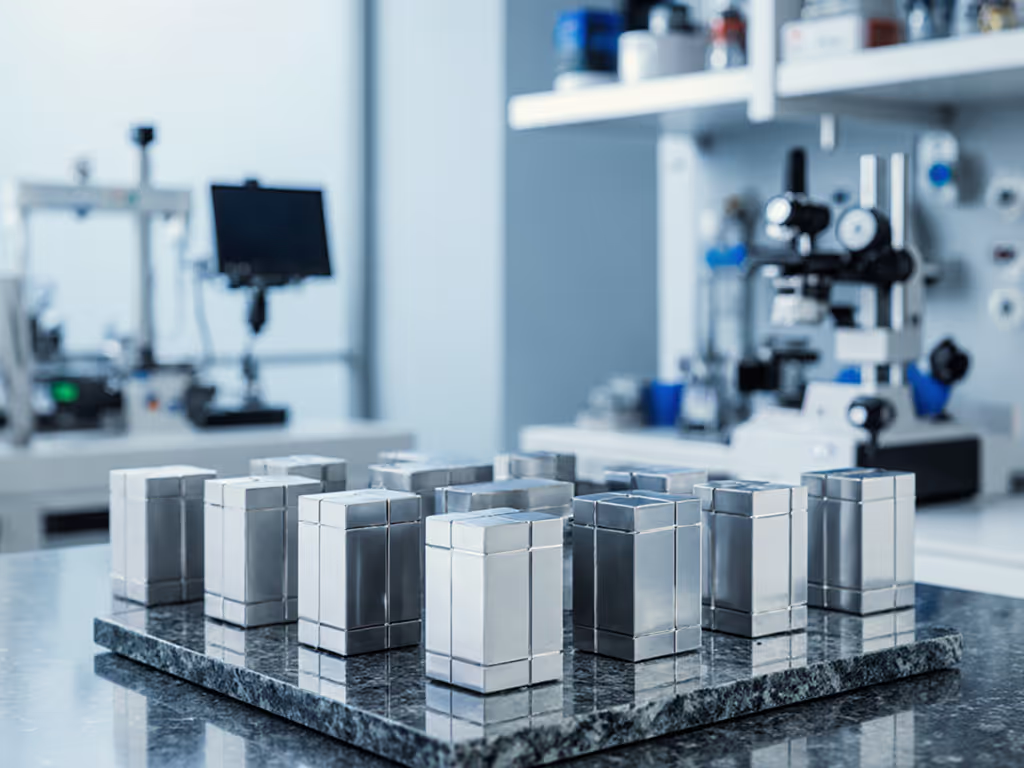

Grade K (Calibration Grade) blocks carry tolerances of ±0.05 µm to ±0.1 µm and are reserved for reference standards and CMM calibration. Grade 0 carries ±0.1 µm to ±0.2 µm and serves inspection and toolroom applications. Grade AS-1 (workshop standard) allows ±0.5 µm to ±1.0 µm for machine setup and routine part verification. This hierarchy is not cosmetic: the tighter the tolerance, the less drift a block can absorb before it falls out of grade, so tighter grades demand more frequent recalibration.

When you select a grade, you're also selecting a calibration rhythm. If you buy Grade K blocks but treat them like Grade AS-1 (never recalibrating, exposed to temperature swings), you've purchased precision you cannot keep, and your uncertainty budget evaporates.

Q: What does ASME B89.1.9 actually specify for recalibration?

A: The ASME standard recommends, rather than mandates, recalibration periods for each grade. Grade K typically calls for yearly recalibration; Grade 0 every 1-2 years; Grade AS-1 every 2-3 years; and Grade AS-2 every 3-5 years. These are benchmarks, not laws. Your shop's actual interval depends on handling practices, environmental control, and documented drift history.

Key assumption: these intervals presume blocks are stored in a controlled environment, handled with care, and used within stated tolerances. If your shop floor temperature swings 15°C from morning to afternoon, or if operators use gauge blocks as setup gauges and toss them in trays, recalibration intervals compress. I've logged hourly temperature and humidity around our shop's surface plate and discovered drift that looked like block degradation but was actually environmental creep. Once we invested in environmental control, recalibration intervals stretched, and our CMM finally tracked the micrometers on Monday morning.
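To put a number on what a temperature swing does, here is a rough sketch of linear thermal expansion. The coefficient (about 11.5 µm per metre per °C) is an assumed typical value for steel gauge blocks, not a figure from any standard or certificate, and the function name is hypothetical. Use the value your block manufacturer states.

```python
# Assumed typical expansion coefficient for steel gauge blocks,
# in µm per metre per °C. Illustrative only; check your manufacturer's data.
STEEL_CTE_UM_PER_M_C = 11.5


def thermal_growth_um(nominal_mm: float, delta_t_c: float,
                      cte_um_per_m_c: float = STEEL_CTE_UM_PER_M_C) -> float:
    """Length change in µm for a block of nominal_mm after a change of delta_t_c."""
    return cte_um_per_m_c * (nominal_mm / 1000.0) * delta_t_c


if __name__ == "__main__":
    # Compare a tightly controlled room, a loosely controlled one, and a shop-floor swing.
    for swing_c in (0.5, 2.0, 15.0):
        growth = thermal_growth_um(50.0, swing_c)
        print(f"50 mm block, {swing_c:4.1f} °C change: {growth:.2f} µm")
```

Under that assumption, a 15°C swing moves a 50 mm block by several micrometres, which dwarfs every grade tolerance quoted above. That is why environmental creep can masquerade as block degradation.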

Working Standards Implementation and Interval Risk

Q: Should I use one set for reference and another for the shop floor?

A: Yes, and this is where the tolerance-driven pick matters. A best practice: keep Grade K or Grade 0 blocks locked in a controlled lab environment as your working reference. Use Grade 0 or AS-1 blocks on the shop floor for machine setup and in-process gauging. This separation slows drift in your reference set and gives you a stable baseline against which to correlate field data.

When you do recalibrate, you want error bars on those measurements, not just a pass/fail certificate. A calibration lab accredited to ISO/IEC 17025 will provide uncertainty budgets alongside tolerance statements, units, and conditions. That is, the lab reports not just "Block A = 50.0000 mm" but "50.0000 ± 0.0001 mm at 20°C, ±2% RH, using laser comparator with 0.00005 mm resolution." That granularity lets you build a meaningful uncertainty budget for your tolerance stack.

Q: How do I know when drift becomes a problem?

A: Track calibration certificates over time. For example, if a Grade 0 block drifts 0.10 µm between recalibrations and your part tolerance is ±0.25 µm (a 0.50 µm total band), drift alone has consumed 20% of your tolerance budget, a 5:1 ratio of tolerance band to gauge error. If drift doubles to 0.20 µm, you're at 40% and the ratio collapses to 2.5:1, far short of a 10:1 test accuracy ratio (part tolerance / gauge tolerance). At that point, you either shorten the recalibration interval, upgrade to a more stable grade, or stop using that block for critical work.
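The percentages in this example can be reproduced in a few lines. This is a minimal sketch that assumes the share is taken against the full tolerance band (0.50 µm for a ±0.25 µm feature) and that drift is the only error source. Both function names are hypothetical.

```python
def tolerance_share(drift_um: float, tol_plus_minus_um: float) -> float:
    """Fraction of the full tolerance band (2 x the ± value) consumed by drift."""
    band_um = 2.0 * tol_plus_minus_um
    return drift_um / band_um


def band_to_drift_ratio(drift_um: float, tol_plus_minus_um: float) -> float:
    """Full tolerance band divided by drift (higher is better)."""
    if drift_um <= 0:
        raise ValueError("drift must be positive to form a ratio")
    return (2.0 * tol_plus_minus_um) / drift_um


if __name__ == "__main__":
    for drift in (0.10, 0.20):
        share = tolerance_share(drift, 0.25)
        ratio = band_to_drift_ratio(drift, 0.25)
        print(f"drift {drift:.2f} µm: {share:.0%} of band, ratio {ratio:.1f}:1")
```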

Many shops fail to link drift trend to recalibration interval. They recalibrate on a calendar schedule, "Every 12 months," and ignore whether the block is actually drifting 0.01 µm or 0.10 µm per year. If you plot drift over five certificates, you can justify extending intervals (if drift is negligible) or accelerating them (if drift increases). That data conversation, backed by explicit tolerances and units, is what gets management buy-in for environmental upgrades.
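One way to turn a stack of certificates into a drift trend is a least-squares line through the reported deviations. The sketch below assumes each certificate gives a calibration date and a deviation from nominal in µm. The history values are made up for illustration, and the function name is hypothetical.

```python
from datetime import date


def drift_rate_um_per_year(certs):
    """Least-squares slope of deviation (µm) versus time (years).

    certs: list of (calibration_date, deviation_from_nominal_um) tuples.
    """
    if len(certs) < 2:
        raise ValueError("need at least two certificates to estimate a trend")
    t0 = certs[0][0]
    xs = [(cal_date - t0).days / 365.25 for cal_date, _ in certs]
    ys = [deviation for _, deviation in certs]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("certificates must have distinct dates")
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    return sxy / sxx


if __name__ == "__main__":
    # Made-up five-certificate history for one block (illustration only).
    history = [
        (date(2020, 1, 15), 0.02),
        (date(2021, 1, 20), 0.03),
        (date(2022, 1, 18), 0.05),
        (date(2023, 1, 17), 0.06),
        (date(2024, 1, 16), 0.08),
    ]
    print(f"estimated drift: {drift_rate_um_per_year(history):.3f} µm/year")
```

A slope is only as good as the certificates behind it. With two or three points, treat the result as a rough indication and keep the lab's stated uncertainty in mind.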

ISO 17025 Calibration Requirements and Traceability

Q: What does an ISO/IEC 17025-accredited calibration certificate prove?

A: It proves that the lab's measurement was traceable to a national standard (NIST in the U.S., or equivalent), that the measurement was made under stated environmental conditions (20°C, controlled humidity), and that the lab's own measurement system has known uncertainty. The certificate should list the accrediting body (A2LA or NVLAP in the U.S.) and its accreditation number. If you're setting up or upgrading a lab, see our ISO/IEC 17025 accreditation guide.

For ISO 9001, AS9100, or IATF 16949 compliance, that certificate becomes your audit shield. You must retain it, link it to the block's serial number and asset tag, and ensure the recalibration date has not passed. If a block is out of calibration at the time of a critical inspection, your inspection results become suspect, and auditors will flag rework or scrapped parts.
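The record-keeping described here can be as simple as one entry per block plus a due-date check before any critical inspection. The sketch below is a hypothetical structure, not a format required by any of the standards named. The field names and sample values are assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class BlockRecord:
    serial: str          # block serial number, as on the certificate
    asset_tag: str       # internal asset tag
    certificate_no: str  # accredited lab's certificate number
    due: date            # recalibration due date


def overdue(records, on_date):
    """Return the records whose recalibration due date has passed on on_date."""
    return [r for r in records if on_date > r.due]


if __name__ == "__main__":
    # Hypothetical sample entries for illustration.
    records = [
        BlockRecord("SN-0001", "AT-100", "CERT-A", date(2024, 6, 30)),
        BlockRecord("SN-0002", "AT-101", "CERT-B", date(2025, 6, 30)),
    ]
    for r in overdue(records, date(2024, 12, 1)):
        print(f"{r.serial} ({r.asset_tag}) is past due; see {r.certificate_no}")
```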

Q: Why does ISO 3650 differ from ASME B89.1.9?

A: ISO 3650 is the global benchmark. ASME B89.1.9 is the North American standard. Key difference: ISO specifies stricter environmental and surface finish requirements and defines calibration at 20°C (68°F) under controlled humidity. ASME allows slightly looser tolerances at lower grades, making ASME blocks more affordable but less stable for ultra-precision work.

If your shop is purely domestic and your calibration lab works to ASME B89.1.9, ASME blocks suffice. If you ship to international OEMs (aerospace, medical device) or must conform to ISO 9001:2015 global requirements, ISO 3650-certified sets provide interoperability and broader traceability. Again, this is a tolerance-driven pick: specify the standard in your procurement, not just the grade.

Interval Determination in Practice

Q: How do I set a custom recalibration interval for my shop?

A: Start with the ASME or ISO recommendation for your grade. Then document three things:

- Usage frequency: How many measurements per month per block? High-use blocks drift faster from wear and thermal cycling.

- Environment stability: Is your lab air-conditioned to ±2°C, or does it swing 15°C? A controlled metrology room wins you interval extensions; an uncontrolled floor compresses them.

- Drift history: After two or three recalibrations, plot the drift per block. If a Grade 0 block shows <0.05 µm drift per year and your tolerance stack uses it for a ±0.50 µm feature, you have headroom to extend the interval. If it drifts 0.15 µm, accelerate recalibration or demote the block to Grade AS-1 work.

Document all assumptions: temperature range, humidity, storage method, and handling rules. This creates repeatable rigor and gives you data to defend intervals to management or auditors.
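The procedure above can be sketched as a small rule set. The baseline ranges are the per-grade benchmarks quoted earlier in this article, and the 0.05 µm and 0.15 µm drift thresholds and the ±2°C limit come from the bullets. Halving the interval when conditions are poor is an assumption made for illustration, and usage frequency is left out because the article gives no numeric threshold for it.

```python
# Benchmark ranges in months (shortest, longest), taken from this article.
BASELINE_MONTHS = {
    "K": (12, 12),
    "0": (12, 24),
    "AS-1": (24, 36),
    "AS-2": (36, 60),
}


def propose_interval_months(grade: str, temp_plus_minus_c: float,
                            drift_um_per_year: float,
                            low_drift_um: float = 0.05,
                            high_drift_um: float = 0.15,
                            controlled_c: float = 2.0) -> int:
    """Suggest a recalibration interval from grade, environment, and drift history."""
    shortest, longest = BASELINE_MONTHS[grade]
    poor_environment = temp_plus_minus_c > controlled_c
    if poor_environment or drift_um_per_year >= high_drift_um:
        # Assumed rule: cut the shortest benchmark in half.
        return shortest // 2
    if drift_um_per_year < low_drift_um:
        # Stable block in a controlled room: use the long end of the range.
        return longest
    return shortest


if __name__ == "__main__":
    print(propose_interval_months("0", temp_plus_minus_c=1.0, drift_um_per_year=0.03))
    print(propose_interval_months("0", temp_plus_minus_c=7.5, drift_um_per_year=0.03))
```

Whatever rules you adopt, write them down next to the assumptions so the interval can be defended from data, not from habit.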

Q: Can I self-verify gauge blocks between calibrations?

A: No, not for traceability. You can compare blocks to each other (using a comparator or CMM) to detect gross drift or damage, but self-verification is not accredited and does not satisfy ISO/IEC 17025 requirements. For compliance and audit confidence, you need third-party, accredited recalibration at every interval. Understand the ROI and risk tradeoffs in our calibration services market guide.

What you can do: retain a comparison record (signed, dated, conditions noted). If a block drifts unexpectedly between accredited calibrations, that record proves when you detected it, limiting liability exposure.

Tying Interval to Tolerance Stack and Workflow

The unseen cost of misalignment: choosing a recalibration interval without regard to your tolerance stack and process environment. Shop by tolerance stack, environment, and workflow, or accept drift. For a practical framework, read our tool selection by tolerance guide. If your tolerance calls for ±0.10 µm repeatability and you calibrate blocks every two years with no environmental control, you're accepting 5-10x more measurement uncertainty than the part allows.

The move: audit one critical feature in your shop. Map its tolerance to the gauge block grade needed. Determine the environmental conditions during measurement. Then set a recalibration interval that keeps measurement uncertainty ≤10-20% of the tolerance. Record the reasoning (assumptions, units, conditions) in a calibration log. That discipline spreads across your measurement system and makes audit day calm, not chaotic.
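If you already have a drift rate for a block, the 10-20% rule can be inverted to give the longest interval that stays inside the budget. This is a simplified sketch that treats block drift as the only contributor to measurement uncertainty, which a real uncertainty budget would not. The function name and parameters are hypothetical.

```python
def max_interval_months(tolerance_um: float, drift_um_per_year: float,
                        budget_fraction: float = 0.10) -> float:
    """Longest interval (months) before accumulated drift exceeds the budget.

    tolerance_um: the feature tolerance, in whatever form your shop budgets against.
    budget_fraction: share of the tolerance allowed for drift (0.10 to 0.20 here).
    """
    if drift_um_per_year <= 0:
        raise ValueError("drift rate must be positive")
    allowed_drift_um = budget_fraction * tolerance_um
    return 12.0 * allowed_drift_um / drift_um_per_year


if __name__ == "__main__":
    for fraction in (0.10, 0.20):
        months = max_interval_months(0.50, 0.05, fraction)
        print(f"budget {fraction:.0%}: recalibrate within {months:.0f} months")
```

Treat the result as an upper bound to check against the grade benchmark, not a replacement for it.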

Recalibration intervals are not calendar artifacts. They're engineered intervals tied to grade, environment, and traceability. When they align with your tolerance stack, your measurements stay true.